Voxel51 employees share about the company’s culture, values, and mission, as well as the opportunities for growth and development within the organization. From an open source project to an enterprise product, Voxel51’s visual AI is used worldwide in academic research labs, startups, and Fortune 10 companies. A fully-remote Series B startup, they are building a platform that empowers machine learning teams to create more accurate, less biased AI across a number of exciting fields (healthcare, security, self-driving cars).

VOXEL51 IS HIRING!

Please consider applying at the links above!

Got questions? You can email recruiter Remy (remy@voxel51.com) or connect with Remy on LinkedIn, and/or email VPE Josh (josh@voxel51.com) or connect with him on LinkedIn.

The engineering team is growing!

TRANSCRIPT

Remy Schor: I’ll start by just offering some basics about who we are. Series B, we raised our Series B last April. Foundationally, we build a tool for people who make AI, so visual AI engineers. I’m gonna ask Josh to speak a little bit about our business and talk a little bit about the culture.

Remy Schor: What I really like to talk about is from a sort of collective community standpoint – we are completely distributed. We have folks all over the US and Canada, currently just in North America. We’re all within a couple of time zones of each other, so we’re able to sync quite regularly throughout the week, and then we communicate asynchronously with Slack.

In terms of the team and how we’ve grown, we are now almost 50 people, which is really exciting and a turning point for us. Josh is gonna talk a little bit more about just statistically how we’ve gotten there, but I can say, sort of spoiler alert, that we doubled our headcount over the last 12 months exactly. Both Josh and I are new since then, new in that interim period, but, you know, have been heads down growing engineering and some go-to-market strategy hiring as well.

I’ll just circle back to the piece that I started to talk about with respect to our distributed environment. We really believe that people do their best work when they’re in a comfortable space, and for most people, that’s some version of home, home office, local coffee shop, local WeWork, what have you.

What we really do by allowing people to work where they’re most comfortable is we meet them where they are with whatever they’re working on professionally and then also personally. Whether it’s having pets, I’m not sure if you all could just hear my dog barking, I’m hoping no one could hear her. Awesome. Basically accommodate, right?

We are inclusive through accommodation, whether that’s people dealing with pets, they’re pet parents or they’re people parents or they’re, you know, taking care of other folks and their families and their lives, managing extracurriculars, right? Continuing to learn, having that growth mindset. And so I think we really have created an environment where all of that is quite encouraged and supported. Josh?

Josh Leven: Yeah. Awesome. Thank you Remy. Hi everyone, I’m Josh, VP of engineering at Voxel51. Lemme just start by giving you just some basics about our engineering team. As Remy said, we’re fully remote, all in North American time zones. We have 20 engineers today that are divided up into four squads of three to six people, three to seven... actually we just hired one.

Our tech stacks are Python on the backend and TypeScript everywhere, but that’s I guess how TypeScript works. We are not so much a SaaS product, we’re primarily deployed into our customers’ cloud or on-prem. Our customers care very deeply about the security of their data and so they prefer to keep that in their own environment, and so we again meet them where they are to help them be successful. As Remy said, I’m also relatively new, joined about six months ago. Lanny here is the elder statesman in this conversation.

I just wanted to share a little bit about the biggest reasons for me to join and one of those is really the impact that the product has. As you’ve probably noticed, the AI revolution is coming or maybe even already here. What we get to do is enable those teams that are building AI models to build models that are less biased, more safe, more reliable, more accurate. It helps them get this new magic out into the world in a way that helps everybody. And we’re helping in a ton of different industries, healthcare, autonomous vehicles, robotics, agriculture, retail, sports, and more that I’m not even listing.

We’re also not just for big companies. We have a vibrant open source community, including students, researchers in academia, and machine learning professionals. And of course we do have a growing community of enterprise customers. Another thing I really love about working here is we’re not just building a great product, but we’re also making big investments into innovation. Remy mentioned that Series B we raised last year, and we used some of that money to hire up a team of machine learning, pure research folk led by one of our co-founders, Jason, who’s a research professor at University of Michigan.

And what’s great is that they do this groundbreaking research that we then get to incorporate into the product, and being able to talk about the stuff that they’re doing is one of the ways we continue to be a part of like the conversation in cutting edge artificial intelligence. It’s not just for like marketing purposes, not just for the product.

We also wanna include everyone at the company. Every week we have a meeting called ML paper review, or every other week, where someone will take one of these papers that’s in the cutting edge of research and present it to the company so we can all grow and learn. All right. With that, let me hand things over to Lanny, one of the engineers on our team working most directly with the machine learning team and she can talk more about what it’s like to work here.

Lanny Wang: Yeah. Hi, my name is Lanny. I’ve been working at Voxel51 for two and a half years. I worked on the open source app and in the enterprise as well. Working at Voxel51, I feel one thing I really enjoy is actually working with people who are actually kind and very respectful. It is just a pleasure to work with them, and we work in a very remote setting, but you never feel like you’re actually working in a silo.

We communicate a lot and for me, I feel I actually know all the engineers, not only the ones within my squad. On every topic, whenever I reach out, the people who are relevant are always super happy to have a good discussion. Also, the second point is I think we enjoy a certain level of autonomy, being able to come up with the solution and the design for fixing something ourselves, and we have that trust among the team and that flexibility.

Third, being in the rapidly growing space for AI, I feel in every squad we’re able to tackle the most up-to-date problems in the industry. And for me, I feel very driven and excited about tackling those problems. These are the tools MLEs need for what they’re facing every day. That gets me very passionate and enthusiastic about the problem I’m solving.

Also, as a company, I feel we value transparency and clarity a lot.

It’s not only that we try to bring that from data insights, but I feel within the org and among engineers ourselves too, we try to record all the docs and meetings so it’s easy to find records even if something happened async, as if we were there at the moment. Also as an engineer, every year I think we can pick a conference to go to, and previously I have been going to CVPR, and today one of my coworkers shared some great news with me. He had a paper accepted by ICML 2025 related to climate AI. He’s actually a software engineer, he’s not an MLE, so that’s really exciting. And that’s my perspective on working as an engineer at Voxel51.

Angie Chang: Great. This is the time that we normally go into breakout rooms, but I think today, since we have only two companies joining us and one of them is sick/out today, we’ll just stay here and I’ll ask you all more questions that I have prepared. But first I wanted to see if anyone else has a question in the chat or if you wanna raise your hand.

Remy Schor: Can I preemptively say that I’m gonna put my email address in the chat? People are starting to message me with my name. It’s gonna be hard for me to keep up so let me go ahead and put my email address in there right now, and if you do have a question or a curiosity after this meeting, you’re welcome to just shoot me an email directly. That would be really helpful. If you’re gonna do that, include your LinkedIn profile. Thank you.

Angie Chang: Great. So I’m looking through the chat and there is a question: Are there opportunities for technical writing roles, documentation, or similar? Asked by Nessa.

Remy Schor: Nessa’s note is what prompted me to say let me share my email right now. Let me outline the roles we have open at the moment. Keeping in mind we are a small organization, we are hiring in a very disciplined capacity. We are hiring and we are continuing to hire.

We have a Principal Engineering role open. This is full stack – I need somebody who has React and Python, that’s the game plan. It’s principal level, so they have to have some combination of hands-on coding, a desire to continue to write production code, architecture, design and mentoring. We’re not too married to number of years of experience, but this is a very senior position, so it’s not gonna be appropriate for somebody who’s early in their career.

I am hiring for a pretty nuanced Machine Learning Engineer. This is specifically a machine learning engineer who wants to spend their time largely interacting with our enterprise clients. Not in a solutions capacity, right? I’ll clarify, we don’t have a services division, we’re not solving problems for our end user, but we are creating solutions with them. And so that’s really what this person will be doing 80% of the time. This machine learning engineer, ideally computer vision engineer, is relationship managing and solving problems with our users.

And then we have an SDR role open, which, if y’all know an SDR, that’s a sales development representative. It’s typically gonna be like a pretty non-senior salesperson. Somebody who does a lot of the basic kind of cold emailing, warm emailing, introducing... If you’ve ever been pitched something, a software solution, those pitches are probably coming from SDRs. It’s a heavy lifting role, but it’s a really good way to break into software sales. And so typically we’d be looking for somebody with about a year’s worth of experience as an SDR – a little bit more flexibility with that one. If anybody has any questions about those roles specifically, that’s why I’m about to put my email address in the chat, and you’re welcome to reach out directly.

Angie Chang: The next question that I see here is, how does product management fit into AI industries?

Josh Leven: That sounds like a Josh question. Yeah, so I don’t see who asked that, but just to clarify, you’re asking like, how do we use…

Angie Chang: Susan G?

Josh Leven: Susan, awesome. Susan, are you asking how do we use product management to deliver what we’re building? Or are you asking how we see our customers using it? Awesome. Yeah, so we use it I don’t think in a particularly innovative way. Our product manager still does the sort of things you would expect: gathering ideas and insights from our customers, from people internal to the company, from wherever else ideas can come from, works those ideas into something more concrete and solutions to actual problems, and puts ’em through like a product development process.

They’ll go through design, they’ll get verified, like we’ll put those designs in front of our customers and get their feedback. They’ll work with the engineers to break it down into tickets. And then of course there’s on the more research side, during that kind of ideation phase, there’s a conversation that happens about where do we see potential research complementing a feature like this?

What’s something we can do to build this feature? Not really like just the way a competitor might build it, but in an innovative way or give it capabilities that nobody else in the industry has. Or sometimes it’s even like, what are we seeing our customers trying to do that we think we might be able to research a solution for them so that they can achieve their goals through us more easily than having to build it themselves. How did I do Susan? Does that basically answer your question? Awesome, thanks so much for asking.

Angie Chang: The next question I see is about the interviewing process from Laura. Curious about your thoughts on multiple round interviews, “some companies have up to six rounds”…

Remy Schor: Yeah, I can take that one. I’m actually just scrolling up to see if I can identify… Okay, Laura. Got it. Yeah, so it does depend on the open role, right? How many rounds we do? I will say that I think we work very hard to be quite thoughtful about utilizing the candidate’s time appropriately, and making sure that we’re getting enough information so that we can make an informed decision. Josh in particular is probably the most thoughtful interviewer I’ve ever had the pleasure of working with. Don’t tell my other executives that I said that. It’s really extraordinary. I mean truly I’ve been recruiting for 20 years and I feel I’ve learned more from Josh in the last six months as a point person hiring manager than probably anybody in my whole career, so that’s been really great. Maybe I should let him answer this question here.

Here’s what I’ll say: for a leadership role, yeah, there’s probably gonna be six rounds. We don’t, you know, often assign any type of take-home technical tests. That’s not really our approach. We want a real-time, conversational interview process that resembles a day-to-day situation. But you know, you gotta meet both co-founders, right? And you have to meet Josh probably twice. He is the VP and if you’re interviewing for a very senior role, I would wanna meet him more than once, right?

He’s gonna be your direct manager. That’s four conversations right there. Plus you still wanna meet at least one or two engineers from the team in some capacity. So there’s your six. If I’m a senior, if I’m a software engineering manager or to some extent possibly this principal engineer, I mean, to me that feels pretty reasonable even though it sounds like a big number.

What I will say is I manage all of recruiting, including scheduling, and I’m relentless with scheduling. Josh can attest, so if there’s a positive signal and both parties are quite interested, even though there might be a number of steps, they can happen rather quickly and we do our very best to schedule them in a very appropriate, manageable amount of time. Typically I shy away from setting up individual interviews that are more than 90 minutes. I think that starts to get a little too lengthy, but it’s possible to meet two separate people on the same day.

I will say for anybody in this market right now, and anybody who’s sort of earlier in their career, y’all maybe don’t know what it used to be like. You used to have to go on site to the company’s actual office, even if you didn’t live in their city, and sit for eight, you know, hours of interviews for a whole day. That’s what it was like.

It’s obviously not like that anymore. We do everything virtually and so we make it accessible even though it may feel like quite a few steps. For a non-leadership, non-very-senior role, we try to keep it to three or four steps. There’s just fewer people to meet at that level. Hopefully that answers your question, Laura. If any clarifications are needed, by all means.

Josh Leven: For the record, I have nothing to add to that, Remy.

Remy Schor: You remember, Josh, probably back in the day having to put on a suit, go to an office, you know, sit in front of a bunch of people you didn’t know for hours and hours, maybe eat lunch with them. I don’t know, it was like a totally different scene.

Josh Leven: Interviewing virtually is quite a delight. I remember my first jobs were in New York where I did have to put on a suit and then I moved out to California and I went to my first interview in a suit and everyone was very confused and never did it again.

Remy Schor: Yeah, that, that’s true. There are definite coastal differences, and also just, I mean it’s just, everything’s changed now.

Angie Chang: We have a question from Julissa that says, can you talk about the ML AI stack? Are you hosting your own training models or leveraging third party providers? And if so, which ones?

Josh Leven: Yeah, yeah, great question. I may not know this as well as Lanny, so Lanny, please correct me if I get this wrong, or do you know the answer? And you can just answer it.

Lanny Wang: We are data centric, so we actually are open and very flexible. So in people’s AI stack, like we are not the throttle that you would have. We integrate with all the popular tools actually.

Josh Leven: Yeah, exactly. You can easily like load and apply pre-trained models. We have this thing called the Model Zoo that’s full of models that you can just kind of grab and run using the system. And then we have, as Lanny said, all sorts of integrations, but we’re not directly, as part of our system, hosting models on any kind of external provider.

Angie Chang: There’s a question from Garima about a tech program manager role. Is there maybe any opening in the future?

Remy Schor: No, I mean that’s the kind of thing… it’s pretty tough to predict exactly what we’ll be hiring for in 2026. That’s not on the map for 2025, but email me.

Angie Chang: A question from Ashley. What are you looking for as a culture fit?

Lanny Wang: Yeah, I think first of all, being a genuine person to communicate with, because no one likes to work with assholes. And then second, also being able to work very independently to a certain degree, because we are remote, and so being able to get deep into things and push them forward yourself. And also when there is an issue, I love that in general here, rather than complaining about it, usually people look at, okay, what things need to be done, and then we start working on it.

Josh Leven: Yeah, thanks Lanny. I’ll add one or two things to that. It’s really important that we’re building a culture; every new person you hire adds to the culture, right? It’s important we’re building a culture that is low ego.

We’re not looking for people who think they have all the answers, but ones who are good at collaborating with their squad and helping to pull out everyone’s great ideas and have the best ones rise to the top. And yeah, able to have like really good collaboration and productive conversations, willing to jump in and help one another, ’cause as much as people do like to get heads down, when they run into an issue, they’re quick to post it on Slack as they should be, and there is always an outpouring of people being like, “Oh, have you tried this? Have you tried that? Lemme jump in…” and it’s really important.

What we’re building is complicated and building it to work with every possible customer scenario makes it even more complicated. And so there’s a lot of wisdom on the team, people who’ve been there a lot longer than me who are able to help everybody navigate that.

Remy Schor: I’ll just add a personal theory or philosophy I have, which is that if somebody has demonstrated the capacity to care deeply about something in their life, right? And whether it’s an extracurricular, could be a sport, could be they don’t even have to be playing. Like they absolutely love the Lakers. If somebody demonstrates to me in the first conversation that they have passion for something, I believe then that they can have passion for their job. And so that’s like a really good signal for me typically.

If there is an opportunity in your interviews to just tie something back to what you do on the weekends, right? The manager mentions a book that you happen to have read or something like that, right? You know, even if you’re just asking the person, “Hey, what do you do on the weekends?

What are you looking forward to doing this weekend?” And you can tie that back to what you do personally, for me, that’s a really good signal. I do look for that. It’s part of what differentiates people, right? And people hire people, so be a person.

Angie Chang: Okay. I am gonna ask a question about the hiring process. Does Voxel51 focus primarily on visual AI and computer vision models, from what they saw on the website? Or do you also work with data models in other AI domains?

Josh Leven: Yeah, great question. So right now we are 100% focused on computer vision use cases. Is that always gonna be the case? Can’t say, but right now that is really our focus. And I can say this confidently, that’s gonna be our focus this year, but we’re always having conversations about other places we can expand into. It’s a really exciting space, and the kind of thing that we do, which is basically help people to leverage their data to build great models, is not specific to visual AI, so there’s a lot of opportunity there.

Angie Chang: Great. So question from Laura about the interview process. Wait, did we already do that? Sorry, it just keeps jiggling, this little chat window. A question from Abigail: could you talk a bit more about the AI privacy or security issues you’re tackling?

Josh Leven: Hmm. So Abigail, when you say the ones that we’re tackling, I feel like I’m asking the same question I asked before. Are you saying like the privacy and security issues that we tackle for our own software, or how we help our customers with the privacy and security of the AI they’re building? Sure.

Because we deploy everything into our customers’ clouds and on-prem, our issues about AI privacy and security aren’t so big. I mean, certainly when we make our own models, we’re very thoughtful about what data we’re using to train them. Using data to train models is kind of the thing that we do and help others to do, and we’re certainly not taking proprietary data; we’re being very responsible about the data that we use to train the models that we do. But the application that we make, full of the pre-trained models and the models that our customers are making using their data, it’s all on-prem.

We don’t have to worry so much about their data, or data leaking through our product to go anywhere. In fact, we have a number of customers that use a fully air-gapped solution of ours. I guess one of the things that we do is we build an air-gapped solution, to just kind of eliminate any concerns the customers could have about how we’re handling privacy and security, which I should add the team built before I joined and was a huge effort. Lanny and the rest of the team should be very proud of that. There aren’t many companies at our scale that have an air-gapped solution, and it’s been a great advantage for us in the industry to be able to offer that.

Angie Chang: Someone asked about technical interviews. What are your thoughts on using HackerRank style interviews, given AI tools like Copilot, something developers use on a regular basis for developer productivity?

Josh Leven: Oh, can I..

Remy Schor: Well, I was just gonna say, I just don’t care for HackerRank style interviews. I think they don’t appropriately mirror what the day-to-day life of an engineer looks like. Furthermore, it’s essentially a test. You can prepare effectively for HackerRank style questions or LeetCode style questions, but I don’t really think it’s doing anybody any favors.

I will say, and I want Josh to answer this ’cause he’s excited and that makes me happy, but if you’re ever using AI in an interview, the interviewer knows. It doesn’t matter if you think they don’t know, they know. Now, they may have said you can, which is fine, by all means, but you’re never getting away with it, just FYI. I don’t think, Deepti, that you’re trying to; I’m just saying this for everybody. As a recruiter, what I see a lot is, I’ll jump into a first conversation and the person won’t have done research, which is a different conversation for a different time, and I can see that they’re looking us up in real time and reading to me what we do.

And I have to call a timeout. I don’t need you to pitch me, right? I have to backtrack. So we can always tell when you’re using your computer to look something up, whether it’s AI or not. Josh?

Josh Leven: Yeah. So, totally agree with that. I’m very much against those sort of HackerRank/LeetCode style interviews, with or without AI. When I think of technical interviews, my goal is to put you in an environment that is as similar as possible to what you’ll be doing day to day, right? So if you code with AI, then you should be coding with AI, right? If you’re normally able to use whatever libraries you want, and Google answers to things, and talk something through with somebody else, then all of that should be available to you in the interview.

And so that’s kind of how we like to structure the coding part of the technical interview.

It’s like as close as possible to like pairing with a colleague in your own environment on the language that you’re most comfortable with on a problem that like is not a LeetCode problem, that we can have a conversation about trade-offs and software design and like all the sort of things that you normally have conversations with your teammates about when you’re actually completing a ticket. And that’s what the technical interview’s about for us.

Angie Chang: I guess we can go on to our questions that we came up with, if nobody else is gonna ask questions, I’ll ask questions. What are some qualities and experiences that make someone successful at Voxel51?

Remy Schor: Yeah, I mean I think it’s a combination of some of what we’ve already shared. Certainly, curiosity, right? Definitely, passion for what we’re building. I don’t know that you have to come in with that. I mean, it certainly helps, but once you get the lay of the land, like really diving in and, and wanting to be here, and wanting to be part of what we’re building, being kind and thoughtful, I think to be sure, you know,

I am, nine out of 10, 99 out of a hundred times, the very first person that somebody interacts with, with respect to Voxel51, from a candidate standpoint. And so there are some things that are important to me, right? Like, I don’t care if you’re running late, I do need you to let me know, right? As an example, right? And again, things happen, issues pop up, occasionally I’m running late, right? Like I get it, we all have, but being transparent and communicating is really important.

I had another point I was gonna make, well I mentioned curiosity because I think that’s the big one, really understanding the why behind what we’re building and then kind of bringing your own, bringing your own why to the table, Josh?

Josh Leven: Yeah, that’s great. The only thing I would add is, we are still very much a startup. We’re not planning multiple quarters ahead in detail, although we do have a broad roadmap plan. Things come up, like a partnership or a customer, and we unexpectedly need to drop everything and jump on that. So having a certain level of flexibility matters. If you’re used to more of a big company job where things are all laid out and nothing ever interrupts your sprints, well, we do everything we can to not interrupt the sprint, we do try to respect that, but much more than at other places, things are gonna come up. People who can get excited that, “oh man, you know, if we switch gears right here, we have this huge opportunity,” are gonna be a lot happier than those who get frustrated every time something comes up.

Angie Chang: We have a question from Abby. What types of companies do y’all hope to work with, and what tasks are the AI used for?

Remy Schor: I mean... Oh yeah, Lanny, you go.

Lanny Wang: We work with a wide range of industries, from autonomous driving to defense, and from modern agriculture to robotics, so it’s very satisfying. Like sometimes seeing the customer success engineer post the abstraction of the problem that the customer encountered, and seeing the scenario that we were able to help with. Yeah, we don’t really have a specific setting or a specific industry that we’re anchored to. It’s really a wide range of applications and issues that we can solve with visual AI. Any AI industry that works with visual images, videos, 3D point clouds, et cetera, we can work with that.

Angie Chang: I have a question… What ways does Voxel51 engage with the open source community to drive the data centric AI revolution?

Josh Leven: What a well-phrased question. First, every way. Yeah. Sorry, Remy, did you wanna start answering?

Remy Schor: In every way. But Josh, you go ahead.

Josh Leven: Oh yeah, I mean, I’ll name a couple of ways. Like we have a vibrant Discord that we maintain to support people in their FiftyOne journey. We have a whole bunch of events, meetups, hackathons, man, actually trying to think of all the stuff, like we have a whole community slash dev rel team that just spends a hundred percent of their time supporting our community. I couldn’t possibly… someone else jump in and remember all the other things that they do.

Lanny Wang: Yeah. And also on GitHub, we actually have a very active community. We have this thing called FiftyOne plugins that allows people to transform the app. So a lot of the MLEs, they know Python really well, but they don’t write React or TypeScript, but they hope to make a little tweak to the app so they can use it better. So that plugin system allows them to use Python code to generate that UI and build their unique workflow, and they will share it on GitHub. That allows us to see, hey, what are people working on, what’s the need? And we do work with the engineers there to bring the new features in and merge new things from the community, so it’s a very active community.

Angie Chang: Out of curiosity, why is the product called FiftyOne?

Lanny Wang: I listened to one of the podcasts done by Jason and Brian and they did talk about the name Voxel51 and where it comes from. Voxel is the pixel in the video setting, a three-dimensional setting. And then 51 came from the unknown, the alien zone 51. So it means that we’re exploring some unknown. I think that’s the true answer.

Josh Leven: I was given a different answer years later when I joined. Voxel still means voxel, a 3D Pixel, but I said, you know, our product helps you find a needle in a haystack. So you know, if you have a thousand needles, which needle is it that you’re looking for? Maybe it’s needle 51.

Angie Chang: Okay. From Angela, what is the workflow from customer request to end solution? Is the data a mix of synthetic and annotated? And as a part of that process, are you also working with human annotators?

Josh Leven: Okay, I think I can answer this. If you’re talking about what does the customer, what’s the customer workflow as they’re using us to solve their problem, and how does annotation connect with that? Am I getting that right, Angela? So when you say customer request, it makes me think like they’re asking us to do something, but really it’s, I think it’s, yeah, so I’m, I’m gonna answer that question.

Customers typically come to us because they have a ton of data and they want to use that data to make an AI model. Sometimes they’ve been trying to make an AI model with that data and they just can’t get it accurate enough. It’s got blind spots, it has issues, and they need our product’s help to get it over the finish line. So they use our product to explore the data and understand where it stands, right? There’s training data and test data, and they’ll look through the data to see what’s missing in their training data that is preventing the model from learning the things it needs to learn to be a full solution that covers all cases, is less biased, and is accurate in more situations.

And so our product helps to highlight those gaps for them so they can figure out what additional data, for example, they need to get labeling for. Then they can label it and add it to their training set. They use that to train the model, and then they check the accuracy of the model again. And there’s this virtuous loop: as the model gets better and better, we highlight more and more subtle areas where it can improve, they get more labeling, and they improve again. So that’s kind of the cycle there.

We’ve got some cool things in the works for how we can help support the annotation side of that, which we’ll be announcing later this year. But for now, we really kind of stay out of the annotation business. We are partners with a whole bunch of annotation companies, so when it comes to the annotation part, customers just ship the metadata over to them, get the labels, and import the labels over to 51, and the cycle continues.

Angie Chang: The next question from Abigail is, super loosely, around what percentage of employees at Voxel51 are women or non-binary?

Remy Schor: 25%.

Angie Chang: Great. So that’s the last question I see in the chat. I’ll ask a question that we had prepared. Does your company support lateral career moves such as switching between engineering, product or management roles?

Josh Leven: Yeah, absolutely. You know, it’s very much a case-by-case basis, but we’ve had people move in many directions. We’ve had conversations with people about movement as well. As a leader, and for the managers on my team, it’s our job to support you in your growth in whatever direction you want to take your career. Hopefully that’s something that we can do within the company, but if not, then I think part of our job is to help you make that leap from us to the right place for you to continue that growth.

Angie Chang: And how does Voxel51 support new employees during the onboarding process?

Remy Schor: Well, I think bigger picture, it’s maybe not yet a totally scalable process because our COO, who’s incredible, spends like the first half of the first day with every single person who starts. There’s only one of him and he has 100,000 other responsibilities, so I’m trying to start the conversation about how we can make onboarding more scalable and iterative. But currently it’s a combination of our COO getting the person situated on day one and then handing them off to their direct manager. The manager will take over, ensuring that they have all their appropriate one-on-ones, that they meet everybody, that they get ramped up, and that they have the right curriculum.

There’s another thing that our developer relations team does. Earlier, someone had asked how we interact with our open source community. And the answer is, as Josh and Lanny both said, there are a lot of avenues, one of which is that we have a dedicated computer vision dev rel team. They create a lot of curriculum and content. So even before someone starts, if they want to, they can watch the Coursera course on Voxel51 or check out our LinkedIn Learning Lab. There are resources out there, and I believe those also help with the onboarding. We do twice-weekly all-hands, so no matter when you start during a week (typically it’s a Monday), you’ll get introduced to the entire community in real time, virtually, and there’s a whole series of shoutouts and introductions and stuff.

Angie Chang: I’m gonna read another question from the chat. Melissa asked: how does Voxel advise customers on infrastructure, e.g., computing power, memory, storage, and how often do infra needs change as models, amount of models, or nature of model data grow?

Josh Leven: Yeah, this is a great question. We absolutely do. A big part of the onboarding process for new customers is advising them on how to scale their infrastructure, helping them to get it right, and helping them to adjust it over time as those needs change. We have an infrastructure team that helps set those kinds of standards and advise. And as we continue to develop (this year we have a big initiative for performance), we revise those guidelines to say, oh, you know, if you wanna take advantage of these performance improvements, you’re gonna need more cores on these machines and yada yada. So it’s an ongoing, developing thing that is a central part of how we set up customers for success.

Angie Chang: A question from Abby, looking at y’all’s open source library and GitHub, can this tool be used to process non-visual data like observability metrics or complex texts?

Josh Leven: So with the caveat that, as Lanny mentioned, we have a very powerful plugin system that you can do quite a lot with, the core product right now is just images, video, 3D meshes, point clouds and other kinds of visual media. But your question leads us to the same thinking that we have: the same approaches we’re taking could be expanded to other formats.

Angie Chang: Thank you for that. Angela says thank you, it helps on the scope of the product. She noticed defense work as part of the modeling and asks: are you also looking at red team and pixelated attacks? Are you also suggesting emergent models to clients?

Josh Leven: As far as emergent models, if I’m understanding that correctly, we try to keep the Model Zoo that I mentioned pretty up to date. Whenever new industry models get released, we are quick to add them to the zoo so everybody can get access to them and run them really easily inside of 51. The customer success team that Remy was talking about (we’re looking to hire one more person for that team) certainly does advise all of our customers on best practices, strategies, and approaches that they may want to take to help make their work as successful as possible. They are more expert than me on what strategies they recommend when, so I can’t answer that particular question.

Angie Chang: Athena asks, how do you maintain transparency and collaboration while managing a remote team?

Josh Leven: So I don’t wanna just assume I should talk, but…

Remy Schor: Go for it.

Josh Leven: Okay. Thanks. Maintaining transparency takes constant vigilance. I think that’s part of the responsibility of leadership: to go out of their way to share context and be transparent about decisions, directions, and possible directions. That’s just a decision that we make as a leadership team. One of the reasons I was excited to join as a VP here is because I knew that was a core value of the leadership team, and it’s basically a non-negotiable for me. I don’t know how to lead a team without being transparent. I think everything just gets easier if you’re willing to put in the time to be transparent with folks. I guess the rest of the leadership team agrees, so we do that.

Collaboration remotely is tricky and it’s something that we are always talking about and iterating on, particularly remote across different time zones. I think part of it is just figuring out the key touch points where collaboration is most valuable. We do our regular sprint ceremonies, and planning out the work we’re gonna do for the next couple of weeks in a sprint is an important touch point for collaboration, as is figuring out where we need more collaboration in order to figure these tickets out or come up with a plan. For example, one of the squads, the backend squad, is doing a lot of tricky performance work right now. A couple of people get together and do a brainstorming session and write up a doc, then they share the doc, and then everyone comes together, discusses the doc, and gives feedback.

It’s really about creating the right habits and processes and figuring out those touch points. I’m always a big fan of pushing for code pairing, just sitting next to each other virtually and pairing on a problem, whether it’s coding or writing up a doc or whiteboarding or whatever. And then, like I said, time zones become tricky. One person ends their day at 2:00 PM in the other person’s day, so someone’s working solo for three hours, and then the person you’re pairing with wakes up three hours before you. So it’s about making sure you have a clean handoff and a plan. It’s a lot of communication, a lot of thinking ahead, a lot of just being thoughtful.

Angie Chang: Thank you for that. I see a question here from Julissa. Is the open source project the same one offered to clients? And how important is the open source aspect to the product?

Remy Schor: I mean, I’m not an engineer, but I’d be happy to jump in and answer this unless Lanny wants to take it. Maybe I’ll give my answer and Josh and Lanny can hold me accountable in case I’m missing something. This is what I typically tell candidates. We are open source, and we remain very committed to being an open source community. Our open source tool is single user and local install, so it’s quite limited in the sense that you can only work with a visual data set as large as what your laptop can handle.

Three-ish years ago, we launched our enterprise solution. The enterprise solution is how we’ve monetized. The main feature differential right now is that it’s a teams version of the open source tool. It’s a collaborative tool, and in so doing it allows you to work with your team in your cloud on the same large-scale visual data sets, which is kind of solving its own problem, but that’s the ubiquity. It’s more scalable, it’s more performant, more secure, right? There’s enhanced security and, you know, forthcoming additional features. But that open source product, while part of who we are at our core, also drives and energizes users into the enterprise tool, right?

Individual engineers find the open source tool, they love it, they get energized, and in a lot of cases they have in fact come to us asking to uplevel to the enterprise solution. There’s still obviously a sales cycle, but it’s nice when they’ve already heard about us and already know they like the tool. Did I miss anything?

Lanny Zhang: Yeah, and I think previously we emphasized the collaboration, user management, and security side more, but starting this year we added more advanced features, for instance the data quality panel and the model evaluation panel. These advanced features tap better into the enterprise solution for industries and better scope their specific problems. But we remain very committed to the open source community.

Angie Chang: So the eng team sounds very collaborative. We’d like to dig into the culture a bit deeper. Are there any intentional or surprising steps Voxel51 has taken to create an inclusive and supportive environment for women? Or parts of the culture that you’re just really proud of?

Remy Schor: The biggest thing is we have this really remarkable COO. Our executive team, of course, is awesome. Our COO Dave is particularly involved in the day-to-day operations, and he’s really committed to continuing to leverage whatever tools we need to uplevel communication and uplevel, for example, our recruiting efforts with respect to women and non-binary folks, and, you know, people who have been historically repressed in some way.

I feel like we’re still figuring it out, and I think there’s no one right way to ensure that an organization is both inclusive and welcoming and comfortable for people. But I think a good start is that we are committed to dedicating the resources to improving, right? We tap third-party resources. We’ve brought in some programming that has helped us shore up our internal communication a little bit and work in a more collaborative capacity. Yeah, that’s just the beginning. With just 50 people, I really do feel like we’re just getting started.

Josh Leven: Yeah. I’d just add one thing. Initiatives are great and important, but I think what it really comes down to is how it’s incorporated in the everyday. Every time we’re designing an interview process or running a meeting or whatever else we’re doing day to day, there’s a part of us that’s thinking, how do we make sure we’re doing this in a way where yes, women feel comfortable, but also, you know, quieter people feel comfortable, or people for whom English isn’t their first language feel comfortable, right? Initiatives are helpful, but what inclusivity really comes down to is whether you’re being thoughtful in the day-to-day things that you’re doing.

Angie Chang: This next question is: how does Voxel51’s mission to bring transparency and clarity to the world’s data influence your daily operations and decision making?

Josh Leven: Does it honestly influence our day-to-day operations and decision-making? I think it’s a fair question. Like, is that a day-to-day question? When I think about a mission statement like that, I think it’s right at the core of the more strategic stuff that we do, when we’re talking about the different things that we can do in 2025 to take the company to the next level, right? We talked about all sorts of different opportunities, different things we could build and different types of customers we could go after. And in considering those options, bringing clarity to the world’s data is a helpful thing to help us decide when we’re making big decisions.

Angie Chang: What tools and platforms does Voxel51 utilize to facilitate communication and project management?

Lanny Zhang: So we use Jira a lot. All the statuses are kept in sync through Jira, there are some Confluence articles, and there are lots of video recordings in our Google Drive. Also the Slack channels. If there is a certain domain I haven’t touched for a long time and I need something, I usually just search through Slack. Usually other people have already brought it up. I would like to think of myself as being on the quieter side, and I haven’t had any problem communicating what I think very honestly. It feels like a very safe environment to work in.

Angie Chang: For the Principal Engineer role that you have open, how does this role contribute to the development and scaling of your enterprise solutions, especially processing large scale visual AI data sets?

Josh Leven: Wow, I … that’s so specific. I hope that was taken right out of the job description, ’cause you really understand the role. The role is targeted for one of the two squads that are most focused on performance and reliability this year. One of the challenges that the company is tackling in 2025 is that the size of the data sets that our customers are using is really starting to explode. Where we used to talk about a hundred thousand samples in a data set, or maybe 500,000, now we’re talking to companies who have 50 million samples in the data set, where they’ll have a data lake with a billion samples in it.

The challenge that that squad is tackling this year is how do we bring all of the power of 51 to data, which is now several orders of magnitude more than the code was originally intended to handle. The challenges there are everything from infrastructure to backend to front end, because even when you figure everything out, there’s still an enormous amount of data that you wanna show on the front end, and you can’t show it all at once.


What if a Girl Geek Dinner was at your office / space?

What if a Girl Geek Dinner was at your office / space?

Margarita Akterskaia, senior software engineer at Roblox, discusses

Margarita Akterskaia, senior software engineer at Roblox, discusses  Olga Khylkouskay, a Staff Software Engineer at Google showcased the power of

Olga Khylkouskay, a Staff Software Engineer at Google showcased the power of  #3 –

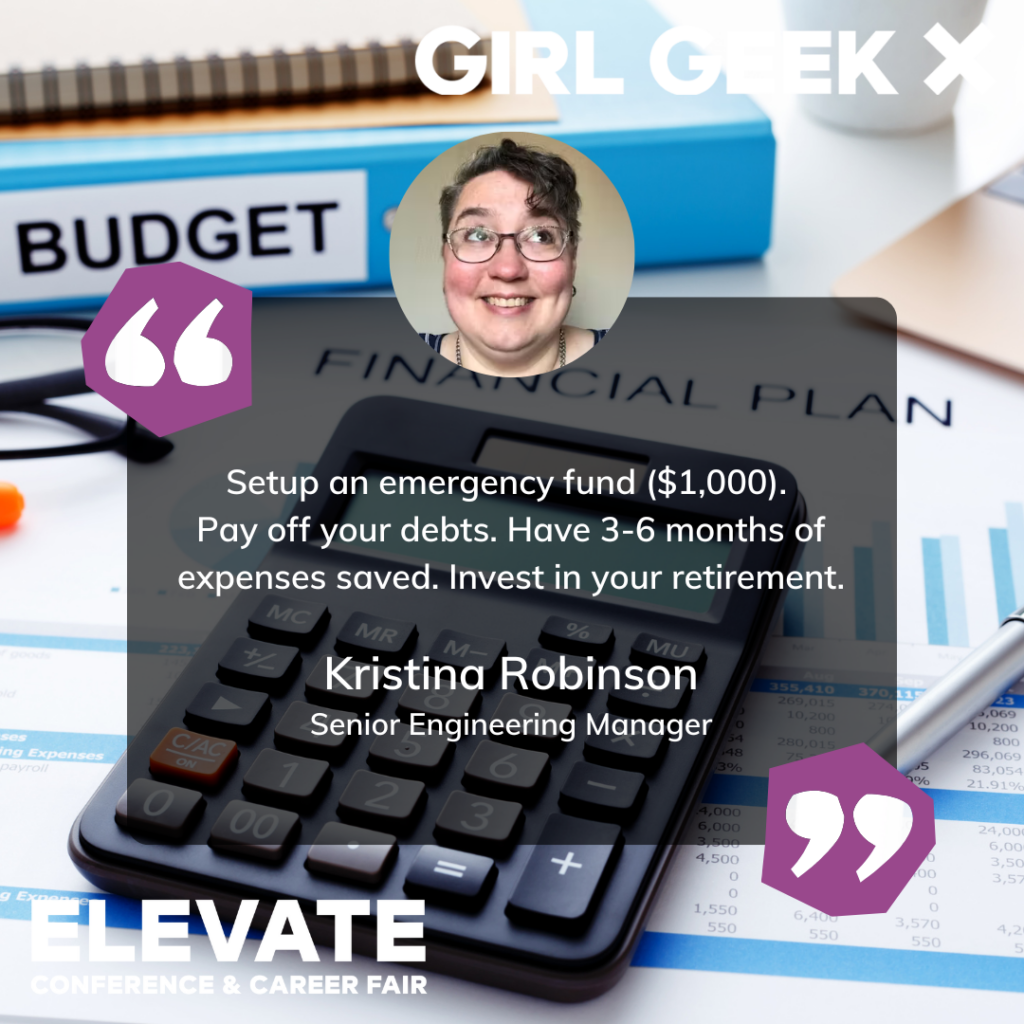

#3 –  The conference theme is “Lift As You Climb.” Speakers from Google, Slack, Cisco, Shopify, Splunk, and more companies spoke and answered questions, sharing career advice with attendees during their sessions, often welcoming connections on LinkedIn.

The conference theme is “Lift As You Climb.” Speakers from Google, Slack, Cisco, Shopify, Splunk, and more companies spoke and answered questions, sharing career advice with attendees during their sessions, often welcoming connections on LinkedIn.

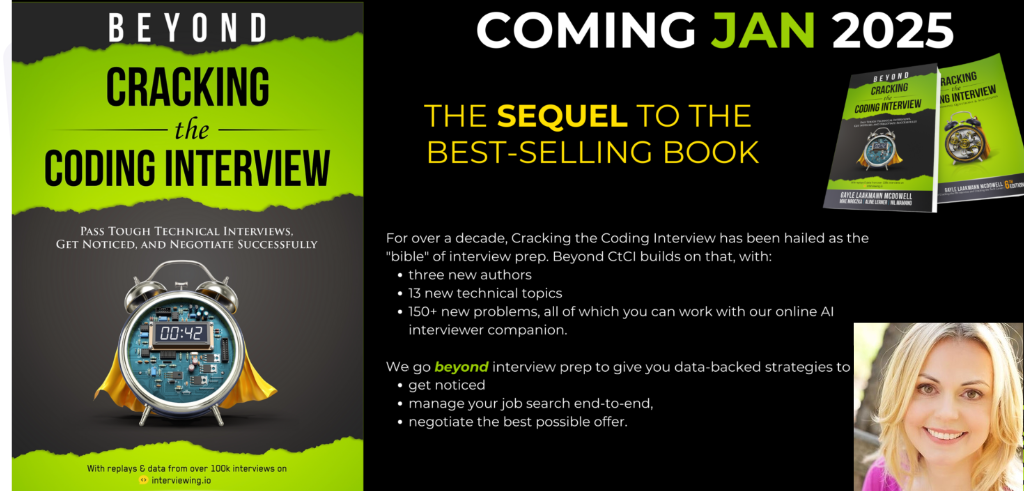

After acing her coding interviews at Google, Apple and Microsoft, founder and author

After acing her coding interviews at Google, Apple and Microsoft, founder and author

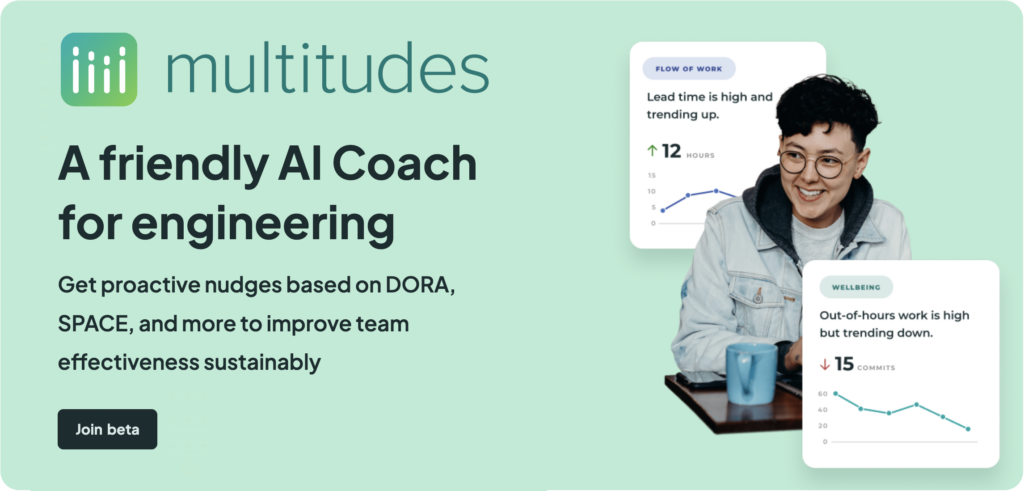

Founded by

Founded by

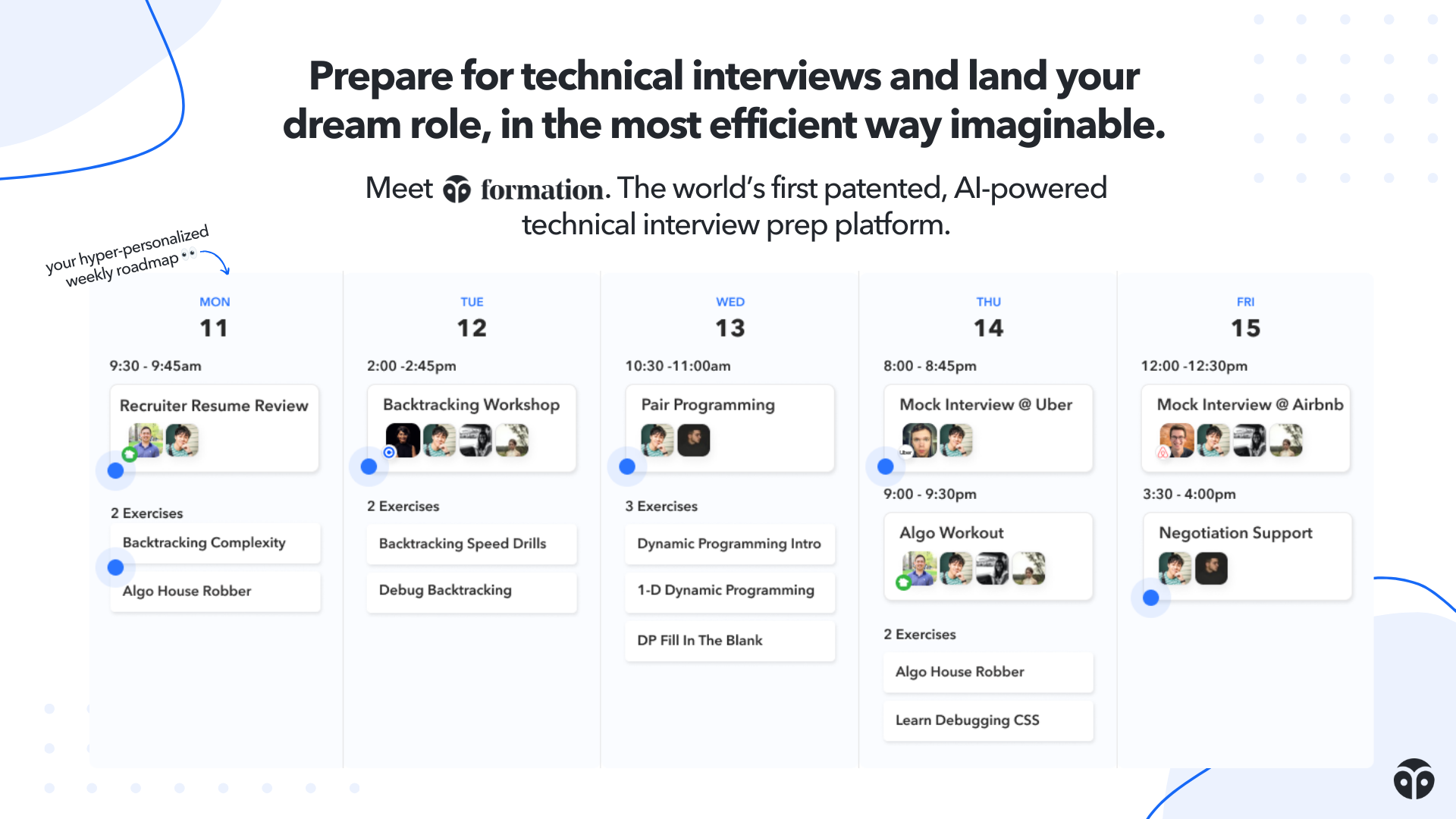

Let’s make your career dreams a reality together.

Let’s make your career dreams a reality together.